Martin Feldkircher, Márton Kardos, Petr Koráb · Topic Modelling · 1 min read

Topic Modeling Techniques for 2026: Seeded Modeling, LLM Integration, and Data Summaries

Seeded topic modeling, LLM integration, and training on summarized data are recent additions to the NLP toolkit.

Introduction

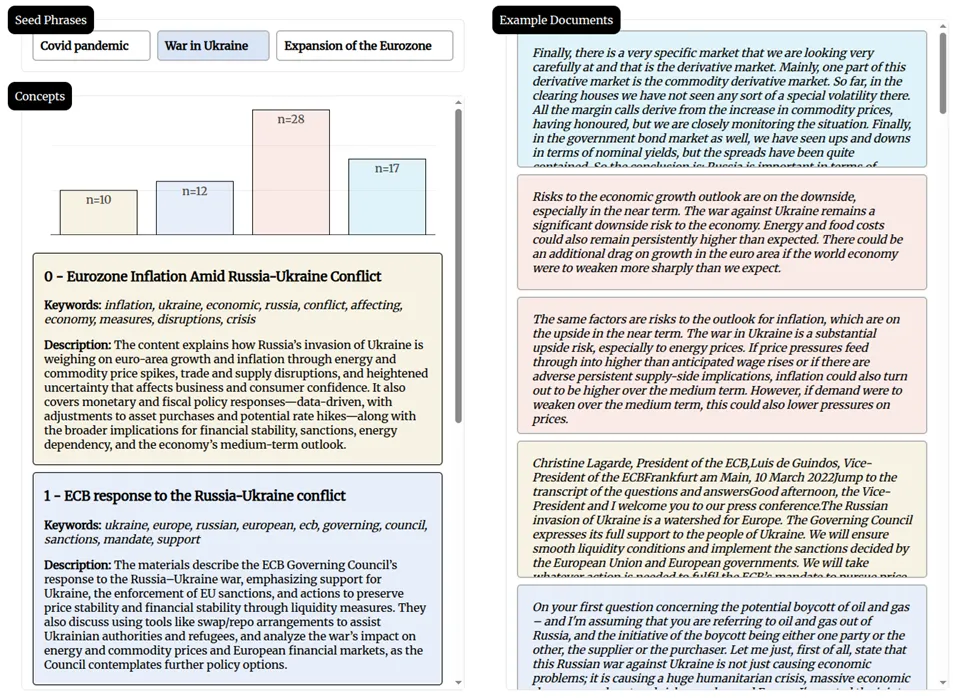

Topic modeling has recently progressed in two directions. The first is a stream of Python packages built on improved statistical methods; it focuses on more robust, efficient, and preprocessing-free models that produce distinct topics without junk (e.g., FASTopic). The second relies on generative language models to extract intuitively understandable topics and their descriptions (e.g., TopicGPT, LlooM).

In our recent Towards Data Science article, you will learn:

- How to use text prompts to specify what topic models should focus on (i.e., seeded topic models).

- How LLM-generated summaries can make topic models more accurate.

- How generative models can be used to label topics and provide their descriptions.

- How these techniques can be used to gain insights from central banking communication.